How well your data pipelines perform determines how quickly data reaches the people and systems that depend on it, and how much it costs to get it there. That is where TD pipeline optimization comes in. Let’s explore seven strategies for improving TD pipeline performance and why each one matters.

Understanding TD Pipeline Development

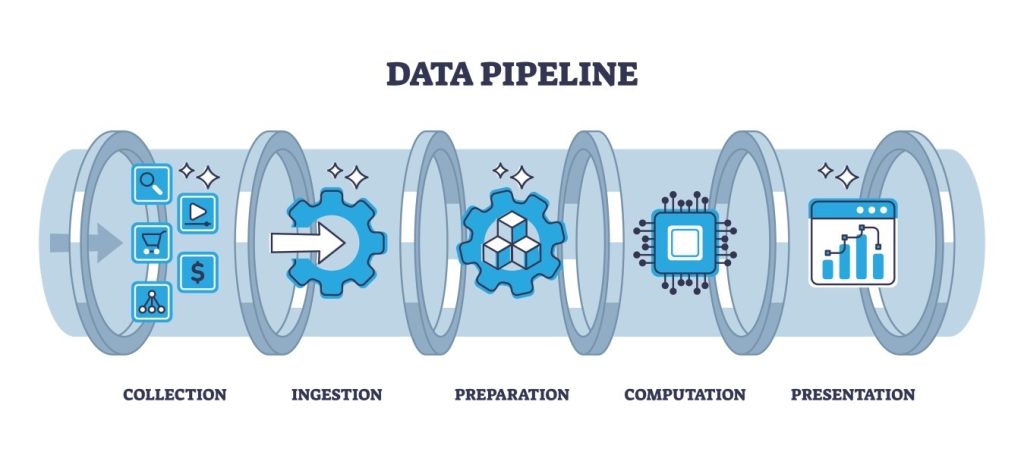

Before diving into optimization, let’s clarify what TD pipeline development involves. TD pipeline development is the process of designing, building, and managing data pipelines that efficiently process, transform, and deliver data from one system to another. This encompasses everything from data ingestion to transformation, storage, and delivery. In short, it’s the backbone of modern data-driven decision-making.

1. Leverage Parallelism for TD Pipeline Optimization

Parallelism is one of the most effective levers in TD pipeline optimization. When data can be split into chunks that do not depend on one another, processing those chunks at the same time can cut total processing time substantially. Tools like Apache Spark and Dask are built for this, distributing the work across cores or machines. Faster transformations mean insights reach decision-makers sooner.
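
As a minimal sketch of the chunk-and-process idea, the example below uses only Python's standard library rather than Spark or Dask. The names `chunked`, `transform_chunk`, and `run_parallel` are hypothetical, and the squaring step stands in for whatever transformation your pipeline actually performs.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import islice


def chunked(items, size):
    """Yield successive lists of at most `size` items from any iterable."""
    iterator = iter(items)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def transform_chunk(chunk):
    """Hypothetical per-chunk transformation: square every value."""
    return [value * value for value in chunk]


def run_parallel(items, chunk_size=1000, max_workers=4):
    """Transform chunks concurrently and return results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map yields results in the order the chunks were submitted.
        chunk_results = pool.map(transform_chunk, chunked(items, chunk_size))
        return [row for chunk in chunk_results for row in chunk]


if __name__ == "__main__":
    print(run_parallel(range(10), chunk_size=3, max_workers=2))
```

Threads suit I/O-bound steps such as reading files or calling APIs. For CPU-bound work in pure Python, a process pool (`concurrent.futures.ProcessPoolExecutor`) or a distributed engine is usually the better fit.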

2. Streamline Data Transformation Logic

Complex transformation logic can slow down your TD pipeline. Optimize your transformations by eliminating redundant steps and using efficient data structures. For example, vectorized operations (like those in pandas or NumPy) often outperform row-by-row processing because the loop runs in compiled code instead of the Python interpreter. Efficient data transformation is a core component of TD pipeline optimization, keeping data moving quickly through your pipeline.
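
To make the contrast concrete, here is a small sketch that computes the same order total twice, once with a Python loop and once with NumPy. The function names and the price/quantity data are invented for illustration.

```python
import numpy as np


def total_row_by_row(prices, quantities):
    """Sum price * quantity with an explicit Python loop."""
    total = 0.0
    for price, quantity in zip(prices, quantities):
        total += price * quantity
    return total


def total_vectorized(prices, quantities):
    """Compute the same sum as a single dot product over whole arrays."""
    price_array = np.asarray(prices, dtype=float)
    quantity_array = np.asarray(quantities, dtype=float)
    return float(np.dot(price_array, quantity_array))


if __name__ == "__main__":
    prices = [1.5, 2.0, 4.0]
    quantities = [2, 3, 1]
    print(total_row_by_row(prices, quantities))
    print(total_vectorized(prices, quantities))
```

Both functions return the same value. On large arrays the vectorized version is typically much faster, though the actual gain depends on data size and hardware, so it is worth measuring on your own workload.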

3. Optimize Data Partitioning

Data partitioning is essential for TD pipeline optimization. Proper partitioning splits data into manageable segments, so a job or query that filters on the partition key can skip the segments it does not need, reducing I/O and improving query performance. Choose partition keys based on your access patterns and business needs (for example, partitioning by date when most queries target a date range) to minimize data shuffling and maximize processing speed.
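
The idea of skipping irrelevant segments can be sketched in plain Python. This is an in-memory illustration only, not how any particular storage engine implements partitioning; `partition_records`, `query_partition`, and the `event_date` field are hypothetical.

```python
from collections import defaultdict


def partition_records(records, key):
    """Group records into partitions keyed by the value of `key`."""
    partitions = defaultdict(list)
    for record in records:
        partitions[record[key]].append(record)
    return dict(partitions)


def query_partition(partitions, key_value, predicate=lambda record: True):
    """Scan only the partition for `key_value`, ignoring all others."""
    return [r for r in partitions.get(key_value, []) if predicate(r)]


if __name__ == "__main__":
    events = [
        {"event_date": "2024-01-01", "user": "a", "amount": 10},
        {"event_date": "2024-01-01", "user": "b", "amount": 25},
        {"event_date": "2024-01-02", "user": "a", "amount": 40},
    ]
    by_date = partition_records(events, "event_date")
    print(query_partition(by_date, "2024-01-01", lambda r: r["amount"] > 20))
```

A query for one date touches only that date's records. A key that most queries filter on works well; a key with very many distinct values can produce lots of tiny partitions, which adds its own overhead.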

4. Implement Robust Error Handling

TD pipelines can encounter failures due to data issues, network disruptions, or resource constraints. Implement robust error handling that separates transient failures, which are worth retrying, from permanent ones, which should be logged and surfaced to someone who can fix them. Tools like Airflow or Luigi provide mechanisms for retrying failed tasks and alerting you to issues, reducing downtime and improving pipeline reliability.
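
For code that runs outside an orchestrator, retry behavior can be approximated with a small decorator. The sketch below is a generic illustration and not the Airflow or Luigi API; `retry` and its parameters are hypothetical names, and it assumes exponential backoff is an acceptable retry policy.

```python
import functools
import logging
import time

logger = logging.getLogger("pipeline")


def retry(max_attempts=3, base_delay=1.0, retry_on=(Exception,), sleep=time.sleep):
    """Retry a function on the given exceptions with exponential backoff."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_attempts + 1):
                try:
                    return func(*args, **kwargs)
                except retry_on as exc:
                    if attempt == max_attempts:
                        # Out of attempts: log and let the error propagate.
                        logger.error("%s failed after %d attempts: %s",
                                     func.__name__, attempt, exc)
                        raise
                    delay = base_delay * 2 ** (attempt - 1)
                    logger.warning("%s attempt %d failed (%s); retrying in %.1fs",
                                   func.__name__, attempt, exc, delay)
                    sleep(delay)
        return wrapper
    return decorator


@retry(max_attempts=3, base_delay=0.5, retry_on=(ConnectionError,))
def fetch_batch(source):
    """Hypothetical extraction step that may hit transient network errors."""
    return source()
```

Restricting `retry_on` to transient error types such as `ConnectionError` keeps the decorator from retrying failures that will never succeed, like malformed input.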

5. Monitor and Profile Your TD Pipeline

A crucial yet often overlooked part of TD pipeline optimization is monitoring and profiling. Use observability tools like Prometheus and Grafana, or the profiling tools built into your platform, to track pipeline performance in real time. Metrics like CPU usage, memory consumption, and throughput can reveal bottlenecks and show where optimization effort will pay off.
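
Before wiring up a full observability stack, per-stage timing can be collected with a few lines of standard-library Python. This is a sketch only: `timed_stage` and the `metrics` dictionary are hypothetical, and in production these numbers would normally be exported to a metrics system instead of kept in memory.

```python
import time
from contextlib import contextmanager


@contextmanager
def timed_stage(name, metrics, rows=0):
    """Record wall-clock seconds and rows per second for one pipeline stage."""
    start = time.perf_counter()
    try:
        yield
    finally:
        elapsed = time.perf_counter() - start
        metrics[name] = {
            "seconds": elapsed,
            "rows": rows,
            # Guard against a zero elapsed time on very fast stages.
            "rows_per_second": rows / elapsed if elapsed > 0 else 0.0,
        }


if __name__ == "__main__":
    metrics = {}
    data = list(range(100_000))
    with timed_stage("square", metrics, rows=len(data)):
        squared = [x * x for x in data]
    with timed_stage("sum", metrics, rows=len(squared)):
        total = sum(squared)
    # The slowest stage is the first candidate for optimization.
    slowest = max(metrics, key=lambda stage: metrics[stage]["seconds"])
    print(slowest, metrics[slowest])
```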

6. Automate Scaling and Resource Management

As your data grows, so does the need for resources. Automation is essential for effective TD pipeline optimization. Implement auto-scaling strategies in your cloud or on-premises environments to allocate resources dynamically based on load, adding capacity when demand rises and releasing it when demand falls. Kubernetes and similar orchestration platforms make this easier by handling the scaling for you.
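
To show the kind of rule an autoscaler applies, the sketch below implements the proportional formula described in the Kubernetes Horizontal Pod Autoscaler documentation: desired replicas = ceil(current replicas × current metric / target metric). The function name, the default bounds, and the omission of the autoscaler's tolerance and stabilization behavior are simplifications made for illustration.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Scale the replica count in proportion to metric / target, within bounds."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp so a metric spike or lull cannot scale past the configured limits.
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 4 workers at 90% average CPU against a 60% target.
    print(desired_replicas(4, 90, 60))
```

The same proportional rule works for other load signals, such as queue depth per worker, as long as the metric rises and falls with the amount of work waiting.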

7. Continuously Test and Improve

Optimization is not a one-time job; it is an ongoing process. Continuously test the performance of your TD pipeline, collect feedback, and refine the pipeline accordingly. Implement CI/CD practices so that every change is tested thoroughly before going live. This iterative approach is the hallmark of effective TD pipeline optimization.
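
As one small example of what a CI run might check, here is a sketch of a transformation with two unit tests written as plain functions, which a runner such as pytest would collect by name. The `normalize_email` transformation and its record layout are hypothetical.

```python
def normalize_email(record):
    """Return a copy of the record with the email trimmed and lowercased."""
    cleaned = dict(record)
    cleaned["email"] = record["email"].strip().lower()
    return cleaned


def test_normalize_email_strips_and_lowercases():
    result = normalize_email({"id": 1, "email": "  Ada@Example.COM "})
    assert result == {"id": 1, "email": "ada@example.com"}


def test_normalize_email_does_not_mutate_input():
    original = {"id": 2, "email": " Bob@Example.com"}
    normalize_email(original)
    assert original["email"] == " Bob@Example.com"


if __name__ == "__main__":
    test_normalize_email_strips_and_lowercases()
    test_normalize_email_does_not_mutate_input()
    print("all tests passed")
```

Keeping each transformation a pure function, with a record in and a new record out, is what makes tests like these cheap to write and fast to run on every commit.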

The Role of Software Development in TD Pipeline Optimization

TD pipeline optimization does not exist in isolation: it is closely tied to software development practices. Writing modular, reusable, and maintainable code is key. Applying software development principles such as version control, unit testing, and continuous integration keeps your data pipelines reliable and adaptable to changing business needs.

Conclusion: Future-Proof Your Data Pipelines

TD pipeline optimization is essential for modern data-driven businesses. By implementing these seven strategies, you can make your data pipelines more efficient, reliable, and scalable. As data volumes continue to grow, optimizing your TD pipelines is not just a best practice; it is a competitive necessity.

At aibuzz, we’re passionate about helping businesses harness the power of data through cutting-edge software development and TD pipeline optimization. Our team of experts specializes in building robust software solutions that drive efficiency and innovation. Whether you need custom software to power your pipelines or expert guidance on optimizing your data architecture, we’re here to help you thrive in the data-driven world.
